Social media platforms are cracking down on fake news and misinformation on their sites.

According to forbes.com, Facebook spreads fake news the fastest, and it is taking measures to prevent that spread.

Facebook uses third-party fact-checking organizations certified by the International Fact-Checking Network (IFCN) to help stop the spread of misinformation more quickly, and it has made it easier for users to report fake news. When a post is flagged as fake news, a notice appears on the post, making readers aware of the nature of the information before they accept it as true.

Another initiative Facebook has implemented to cut down on fake news is disrupting financial incentives for scammers and eliminating the spoofing of well-known news organizations. For consumers, this means fewer spam pages are able to purchase ads that share fake news.

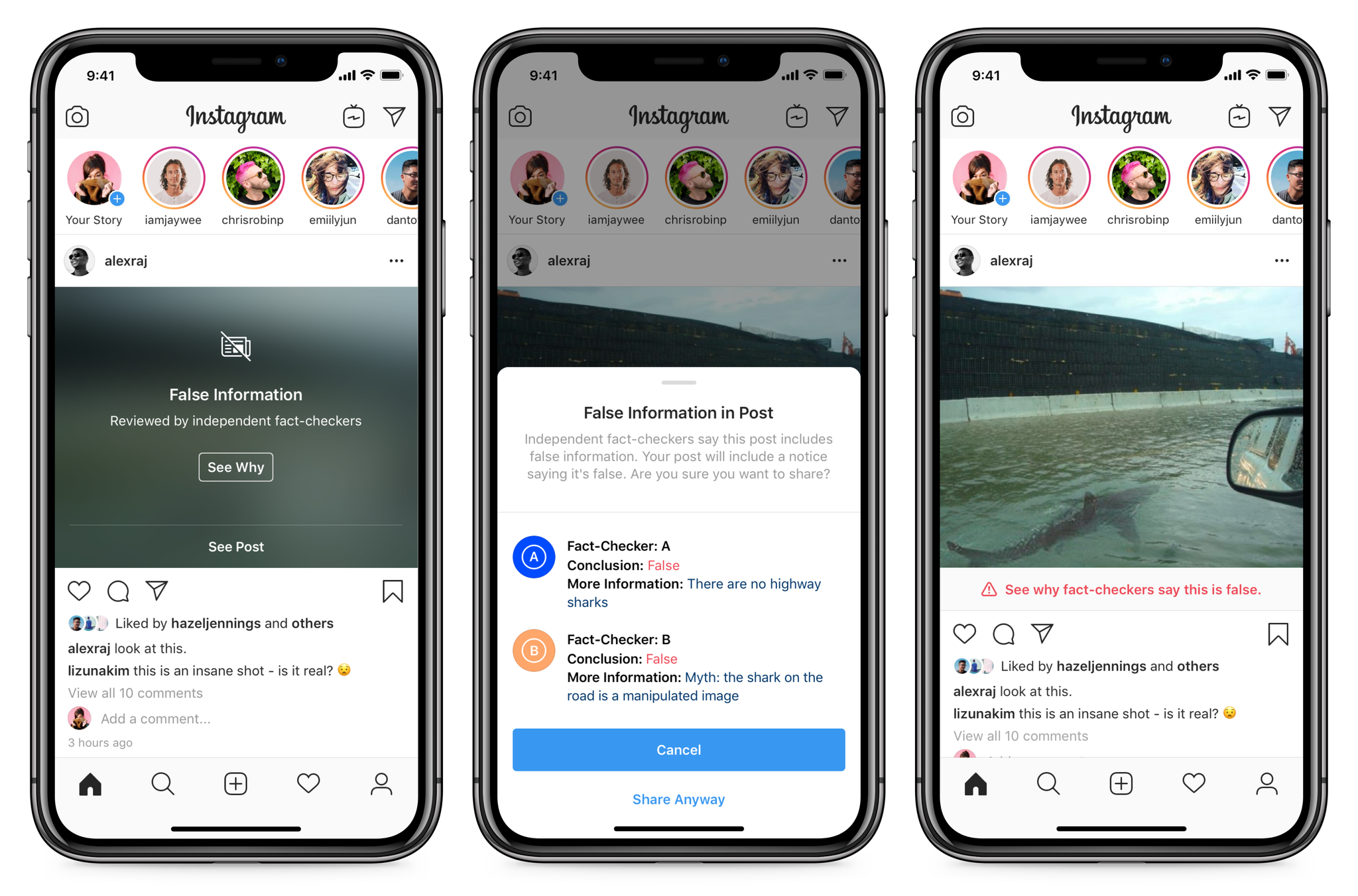

According to Instagram, Facebook recently expanded its 45 global third-party fact-checking networks to Instagram to help review and identify false information.

As stated on Instagram’s blog on combating misinformation, if a third-party fact-checking organization finds that posted information is false, several things happen:

• The photo or video is removed from the Explore page and hidden from the hashtags it used.

• The photo is labeled “False Information” and given an overlay that covers the picture.

• The account that posted the content receives a warning that it has shared false information.

• A label is placed under the post telling the audience where to fact-check the information.

Twitter has taken a similar route to Instagram, adding a label under a flagged post that directs readers to a Twitter-curated page or a trusted external source containing additional information.

Twitter employees Yoel Roth and Nick Pickles explained how the platform handles different levels of misinformation. Depending on the severity of the post, a label is applied to the bottom of the tweet. If the content is severe, the post is greyed out and the user is given the option to view the post or learn more about why it was flagged.

Claims on Twitter fall into three broad categories: misleading information (statements that have been confirmed false by experts), disputed claims (statements that do not have a source and cannot be proven true), and unverified claims (statements that could be true or false but are unconfirmed at the time of posting).

According to help.twitter.com, Twitter recently published its civic integrity policy, which outlines the harms of using Twitter to manipulate others in connection with political elections, the census and ballot initiatives. Violations of the civic integrity policy can lead to profile modification, tweet removal or the labeling of a tweet, and repeat offenders will have their accounts suspended.

Reddit offers its community the least protection when it comes to fake news… It is a blog site, after all. Reddit relies on its users to report and call out fake news.

As stated on its policies and features page, “Users on Reddit tend to call things like they see it.”

Reddit’s users have the option to downvote a post, and moderators are able to blacklist certain sources from commenting on their posts.

Reddit suggests in a blog post that its users post links that back up their claims, to avoid misinformation being spread across the platform. The process of reporting misinformation or fake news has become more accessible to its users: they can flag the comment or post as spam and type “this is misinformation” in the comments, and the information is sent directly to Reddit to be processed in the same manner as spam.